NVIDIA A100 Ampere GPU Launched in PCIe Form Factor, 20 Times Faster Than Volta at 250W & 40 GB HBM2 Memory


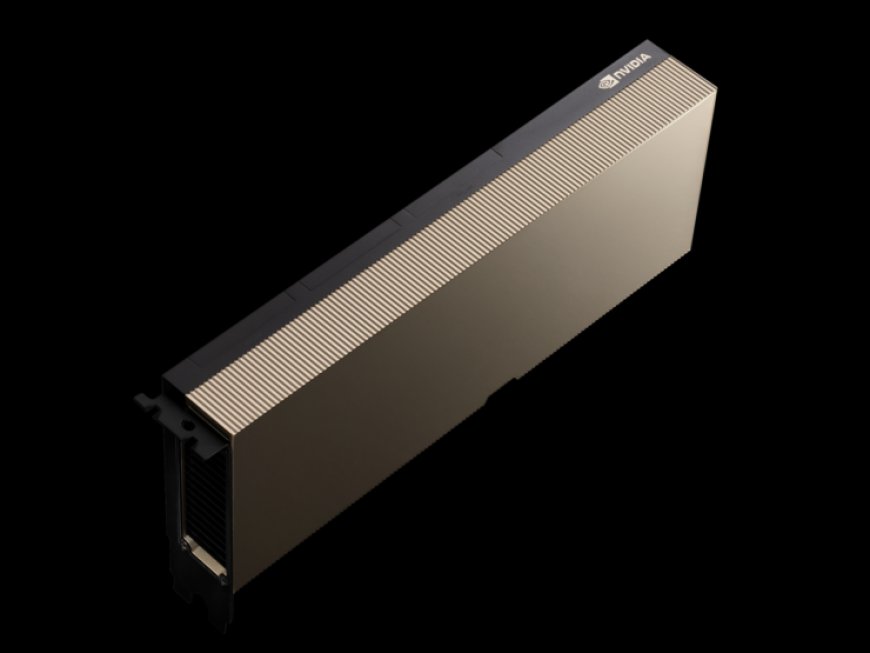

NVIDIA has added a third variant to its growing Ampere A100 GPU family: the A100 PCIe, which is PCIe 4.0 compliant and comes in a standard full-length, full-height form factor instead of the mezzanine board we saw earlier.

Just like the Pascal P100 and Volta V100 before it, the Ampere A100 GPU was bound to get a PCIe variant sooner or later. NVIDIA has now announced that its A100 PCIe GPU accelerator is available for a diverse set of use cases, with systems ranging from a single A100 PCIe GPU to servers that pair two cards through 12 NVLink channels delivering 600 GB/s of interconnect bandwidth.
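As a quick sanity check on the interconnect figure, the sketch below divides NVIDIA's quoted 600 GB/s aggregate evenly across the 12 NVLink links. It assumes every link carries an equal share of the total; the helper name `per_link_bandwidth` is hypothetical and purely illustrative.

```python
# Aggregate NVLink figure quoted for a two-card A100 PCIe setup (from the article).
TOTAL_NVLINK_GBPS = 600
NVLINK_LINKS = 12


def per_link_bandwidth(total_gbps: float, links: int) -> float:
    """Hypothetical helper: split an aggregate bandwidth evenly across links.

    Assumes every link contributes the same share of the total.
    """
    if links <= 0:
        raise ValueError("link count must be positive")
    return total_gbps / links


if __name__ == "__main__":
    share = per_link_bandwidth(TOTAL_NVLINK_GBPS, NVLINK_LINKS)
    print(f"{share:.0f} GB/s per link")
```

Under that equal-split assumption, each link accounts for 50 GB/s of the quoted total.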

In terms of specifications, the A100 PCIe GPU accelerator doesn't change much in its core configuration. The GA100 GPU retains the specifications of the 400W variant: 6912 CUDA cores arranged in 108 SM units, 432 Tensor Cores, and 40 GB of HBM2 memory delivering the same 1.55 TB/s of memory bandwidth (rounded off to 1.6 TB/s). The main difference is the TDP, which is rated at 250W for the PCIe variant versus 400W for the standard variant.
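The core counts above break down evenly per SM. This small sketch divides each total by the 108 SMs and refuses to return a value if the split is uneven; the helper name `per_sm` is illustrative, not an NVIDIA tool.

```python
# GA100 figures as listed in the article.
CUDA_CORES = 6912
TENSOR_CORES = 432
SM_COUNT = 108


def per_sm(total: int, sms: int) -> int:
    """Illustrative helper: units per SM, raising if the total doesn't divide evenly."""
    per, remainder = divmod(total, sms)
    if remainder:
        raise ValueError(f"{total} does not divide evenly across {sms} SMs")
    return per


if __name__ == "__main__":
    print("CUDA cores per SM:", per_sm(CUDA_CORES, SM_COUNT))
    print("Tensor Cores per SM:", per_sm(TENSOR_CORES, SM_COUNT))
```

The result is 64 CUDA cores and 4 Tensor Cores per SM.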

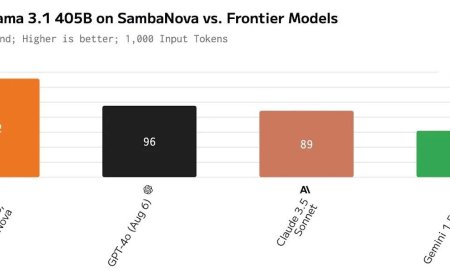

One would expect the card to run at lower clocks to compensate for its lower TDP, but the peak compute numbers NVIDIA has provided remain unchanged for the PCIe variant. FP64 performance is still rated at 9.7 TFLOPs (19.5 TFLOPs via the FP64 Tensor Cores), FP32 at 19.5 TFLOPs, TF32 at 156/312 TFLOPs (with sparsity), FP16 at 312/624 TFLOPs (with sparsity), and INT8 at 624/1248 TOPs (with sparsity).
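The unchanged FP32 figure lines up with the usual peak-throughput formula: CUDA cores × 2 FLOPs per clock (one fused multiply-add) × clock speed. The sketch below assumes a 1410 MHz boost clock, which the article does not state, and also checks that each quoted sparsity figure is exactly double its dense counterpart. Helper names are illustrative.

```python
CUDA_CORES = 6912
BOOST_CLOCK_HZ = 1.41e9  # assumption: 1410 MHz boost clock, not given in the article
FLOPS_PER_CORE_PER_CLOCK = 2  # one fused multiply-add counts as two FLOPs

# Dense vs. sparse peak figures quoted in the article (TFLOPs, or TOPs for INT8).
QUOTED = {"TF32": (156, 312), "FP16": (312, 624), "INT8": (624, 1248)}


def peak_tflops(cores: int, clock_hz: float, flops_per_clock: int = FLOPS_PER_CORE_PER_CLOCK) -> float:
    """Theoretical peak throughput in TFLOPs: cores x FLOPs per clock x clock."""
    return cores * clock_hz * flops_per_clock / 1e12


def sparsity_ratios(quoted: dict) -> dict:
    """Ratio of each sparse figure to its dense counterpart."""
    return {name: sparse / dense for name, (dense, sparse) in quoted.items()}


if __name__ == "__main__":
    print(f"Peak FP32: {peak_tflops(CUDA_CORES, BOOST_CLOCK_HZ):.1f} TFLOPs")
    for name, ratio in sparsity_ratios(QUOTED).items():
        print(f"{name}: sparse/dense = {ratio:.1f}x")
```

With the assumed clock, the formula gives roughly 19.49 TFLOPs, consistent with NVIDIA's 19.5 TFLOPs rating, and every sparse figure is 2x the dense one.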

According to NVIDIA, the A100 PCIe accelerator delivers about 90% of the performance of the 400W A100 HGX card in top server applications, largely because those tasks complete quickly. In complex workloads that require sustained GPU performance, however, the card can deliver anywhere from 90% down to 50% of the 400W GPU's performance in the most extreme cases. NVIDIA said the 50% drop will be very rare and that only a few tasks can push the card to such an extent.
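To put NVIDIA's 90% and 50% figures in context, here is a rough performance-per-watt comparison. It assumes both cards actually draw their full rated TDP (250W vs. 400W), which real workloads may not do, so treat the result as back-of-the-envelope arithmetic rather than a measured figure. The helper name is illustrative.

```python
A100_PCIE_TDP_W = 250
A100_SXM_TDP_W = 400


def relative_perf_per_watt(relative_perf: float, tdp_w: float, baseline_tdp_w: float) -> float:
    """Perf-per-watt of a card relative to a baseline card.

    relative_perf is the card's performance as a fraction of the baseline's
    (e.g. 0.9 for 90%). Assumes both cards draw exactly their rated TDP.
    """
    return relative_perf * baseline_tdp_w / tdp_w


if __name__ == "__main__":
    for share in (0.9, 0.5):
        ratio = relative_perf_per_watt(share, A100_PCIE_TDP_W, A100_SXM_TDP_W)
        print(f"{share:.0%} of 400W performance -> {ratio:.2f}x perf/W")
```

By this rough measure, the PCIe card comes out about 1.44x as power-efficient in the 90% case and only falls behind (0.8x) in the rare 50% case.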

NVIDIA and its server partners are already enabling wide-scale adoption of the PCIe-based GPU accelerator. These partners include:

NVIDIA hasn't announced a release date or pricing for the card yet, but since the A100 (400W) Tensor Core GPU has been shipping since its launch, the A100 (250W) PCIe should follow in its footsteps soon.
