AMD Instinct MI300X & MI300A AI Accelerators Detailed: CDNA 3 & Zen 4 Come Together In An Advanced Packaging Marvel

The AMD Instinct MI300X and MI300A, which launch next month, are among the most anticipated accelerators in the AI segment. There's a lot of anticipation surrounding AMD's first full-fledged AI masterpiece, so today we are rounding up what to expect from this technical marvel.

On the 6th of December, AMD will host its "Advancing AI" keynote, where one of the main agenda items is the full unveiling of the next-gen Instinct accelerator family codenamed MI300. This new GPU- and CPU-accelerated family will be the lead product for the AI segment, which is AMD's No. 1 strategic priority right now, as the company finally rolls out a product that is not only advanced but also designed to meet the industry's critical AI requirements. The MI300 class of AI accelerators will be another chiplet powerhouse, making use of advanced packaging technologies from TSMC, so let's see what's under the hood of these AI monsters.

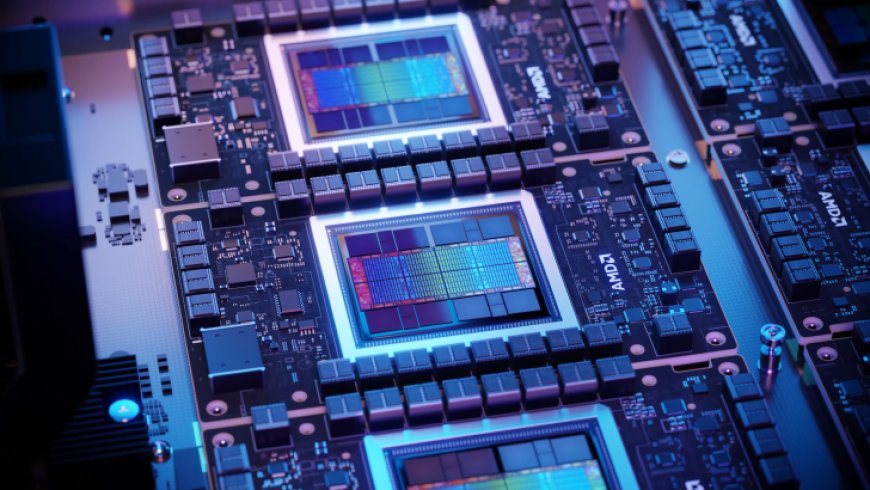

The AMD Instinct MI300X is definitely the chip that will be highlighted the most, since it is clearly targeted at NVIDIA's Hopper and Intel's Gaudi accelerators within the AI segment. This chip is built solely on the CDNA 3 architecture, and there is a lot going on inside it. It hosts a mix of 5nm and 6nm IPs, which combine to deliver up to 153 billion transistors.

Starting with the design, the main interposer is laid out with a passive die which houses the interconnect layer using a next-gen Infinity Fabric solution. The interposer includes a total of 28 dies: eight HBM3 packages, 16 dummy dies between the HBM packages, and four active dies, each of which carries two compute dies.

Each GCD, based on the CDNA 3 GPU architecture, features a total of 40 compute units, which equals 2,560 cores. There are eight compute dies (GCDs) in total, which gives us 320 compute units and 20,480 cores. For yields, AMD will be scaling back a small portion of these cores, and we will get more details on the exact configurations a month from now.
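The compute totals above follow from simple multiplication. A minimal sketch of that arithmetic is shown here, using only the figures quoted in this article for the full, un-harvested die; the function and variable names are our own and purely illustrative.

```python
# Figures quoted in the article for the full (un-harvested) MI300X.
CUS_PER_GCD = 40       # compute units per GCD
CORES_PER_GCD = 2560   # cores per GCD
GCD_COUNT = 8          # compute dies on the package


def mi300x_totals(gcds=GCD_COUNT, cus_per_gcd=CUS_PER_GCD,
                  cores_per_gcd=CORES_PER_GCD):
    """Return per-CU and package-wide totals derived from per-GCD figures."""
    cores_per_cu = cores_per_gcd // cus_per_gcd   # 2560 / 40
    total_cus = gcds * cus_per_gcd                # 8 * 40
    total_cores = total_cus * cores_per_cu
    return {
        "cores_per_cu": cores_per_cu,
        "compute_units": total_cus,
        "cores": total_cores,
    }


totals = mi300x_totals()
print(totals)
```

Shipping parts are expected to have some of these units disabled for yields, so the result should be read as an upper bound rather than a final specification.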

Memory is another area where you will see a huge upgrade, with the MI300X boasting 50% more HBM capacity than its predecessor, the MI250X (128 GB). To achieve a memory pool of 192 GB, AMD is equipping the MI300X with eight HBM3 stacks. Each stack is 12-Hi and uses 16 Gb ICs, which gives us 2 GB of capacity per IC or 24 GB per stack.
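To see how the 192 GB figure falls out of the stack configuration, here is a short worked calculation. It assumes only the numbers quoted above (eight stacks, 12-Hi, 16 Gb per IC, 128 GB on the MI250X); the helper name is hypothetical.

```python
# Stack configuration as described in the article.
STACKS = 8            # HBM3 stacks on the package
DIES_PER_STACK = 12   # 12-Hi stacks
GBIT_PER_DIE = 16     # 16 Gb ICs
MI250X_GB = 128       # predecessor capacity quoted in the article


def hbm_capacity_gb(stacks, dies_per_stack, gbit_per_die):
    """Return (GB per die, GB per stack, GB total) for an HBM layout."""
    gb_per_die = gbit_per_die / 8            # 8 bits per byte
    gb_per_stack = gb_per_die * dies_per_stack
    gb_total = gb_per_stack * stacks
    return gb_per_die, gb_per_stack, gb_total


per_die, per_stack, total = hbm_capacity_gb(STACKS, DIES_PER_STACK, GBIT_PER_DIE)
uplift = total / MI250X_GB - 1   # fractional increase over the MI250X

print(f"{per_die:.0f} GB per IC, {per_stack:.0f} GB per stack, {total:.0f} GB total")
print(f"Increase over MI250X: {uplift:.0%}")
```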

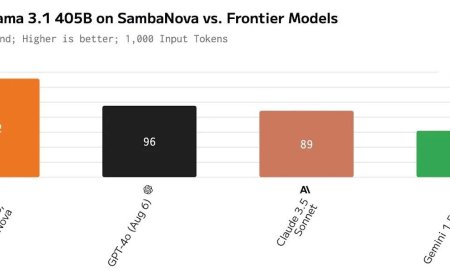

The memory will offer up to 5.2 TB/s of bandwidth, alongside 896 GB/s of Infinity Fabric bandwidth. For comparison, NVIDIA's upcoming H200 AI accelerator offers 141 GB of capacity, while Intel's Gaudi 3 will offer 144 GB. Large memory pools matter a lot for LLMs, which are mostly memory bound, and AMD can definitely show its AI prowess by leading in the memory department.
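As a rough illustration of how these memory figures stack up, the sketch below computes the MI300X capacity advantage over each rival and the average bandwidth per HBM3 stack. It uses only the capacities and bandwidth quoted in this article; the even split of bandwidth across eight stacks is our own simplifying assumption.

```python
# Capacities (GB) as quoted in the article.
CAPACITY_GB = {"MI300X": 192, "H200": 141, "Gaudi 3": 144}

MI300X_BANDWIDTH_TBS = 5.2   # peak memory bandwidth quoted in the article
MI300X_STACKS = 8            # HBM3 stacks on the package


def capacity_advantage(baseline="MI300X"):
    """Return baseline capacity divided by each competitor's capacity."""
    base = CAPACITY_GB[baseline]
    return {name: base / gb for name, gb in CAPACITY_GB.items()
            if name != baseline}


ratios = capacity_advantage()
# Assumption: bandwidth is spread evenly across all stacks.
per_stack_gbs = MI300X_BANDWIDTH_TBS * 1000 / MI300X_STACKS

for name, ratio in ratios.items():
    print(f"MI300X has {ratio:.2f}x the capacity of {name}")
print(f"Roughly {per_stack_gbs:.0f} GB/s per HBM3 stack")
```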

In terms of power consumption, the AMD Instinct MI300X is rated at 750W which is a 50% increase over the 500W of the Instinct MI250X and 50W more than the NVIDIA H200.
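The power comparison can be checked the same way. This is a minimal sketch using the wattages quoted above; the H200 figure is not stated directly in the article, so it is derived here from the "50W more" claim and should be treated as implied rather than confirmed.

```python
# Board power figures as quoted in the article.
MI300X_W = 750
MI250X_W = 500
DELTA_OVER_H200_W = 50   # the article says MI300X draws 50W more than H200

# Fractional increase over the previous generation.
increase = (MI300X_W - MI250X_W) / MI250X_W

# H200 power implied by the article's 50W delta (not quoted directly).
implied_h200_w = MI300X_W - DELTA_OVER_H200_W

print(f"MI300X vs MI250X: +{increase:.0%}")
print(f"Implied H200 rating: {implied_h200_w}W")
```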

We have waited for years for AMD to finally deliver on the promise of an Exascale-class APU and the day is nearing as we move closer to the launch of the Instinct MI300A. The packaging on the MI300A is very similar to the MI300X except it makes use of TCO-optimized memory capacities & Zen 4 cores.

One of the active dies has its two CDNA 3 GCDs cut out and replaced with three Zen 4 CCDs, which bring their own separate pool of cache and core IPs. You get 8 cores and 16 threads per CCD, so that's a total of 24 cores and 48 threads on the active die. There's also 24 MB of L2 cache (1 MB per core) and a separate pool of L3 cache (32 MB per CCD). It should be remembered that the CDNA 3 GCDs have their own separate L2 cache as well.
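The CPU-side totals for the MI300A can be tallied from the per-CCD figures. The sketch below assumes the numbers given above (three CCDs, 8 cores and 16 threads each, 1 MB of L2 per core, a 32 MB cache pool per CCD); the combined 96 MB figure for the per-CCD pools is derived here and is not quoted in the article.

```python
# Zen 4 CCD figures as quoted in the article.
CCDS = 3
CORES_PER_CCD = 8
THREADS_PER_CORE = 2      # 16 threads per 8-core CCD
L2_MB_PER_CORE = 1
CCD_CACHE_POOL_MB = 32    # separate cache pool on each CCD


def mi300a_cpu_totals(ccds=CCDS):
    """Return core, thread and cache totals for the Zen 4 portion."""
    cores = ccds * CORES_PER_CCD
    return {
        "cores": cores,
        "threads": cores * THREADS_PER_CORE,
        "l2_mb": cores * L2_MB_PER_CORE,
        "ccd_cache_mb": ccds * CCD_CACHE_POOL_MB,  # derived, not quoted
    }


cpu = mi300a_cpu_totals()
print(cpu)
```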

Rounding up some of the highlighted features of the AMD Instinct MI300 Accelerators, we have:

Bringing all of these together, AMD will work with its ecosystem enablers and partners to offer MI300 AI accelerators in 8-way configurations featuring SXM designs that connect to the mainboard with mezzanine connectors. It will be interesting to see which configurations these are offered in, and while SXM boards are a given, we can also expect a few variants in PCIe form factors.

One configuration showcased by Gigabyte as part of its G383-R80 rack features a motherboard with four SP5 sockets that are designed to support the Instinct MI300A accelerators. The board features eight PCIe Gen5 x16 slots that can support four dual-slot and four FHFL cards or a total of 12 FHFL cards (4 x16 / 4 x8 speeds).

For now, AMD should know that its competitors are also going full steam ahead on the AI craze, with NVIDIA already teasing some huge figures for its 2024 Blackwell GPUs and Intel prepping its Gaudi 3 and Falcon Shores GPUs for launch in the coming years too. One thing is for sure at the moment: AI customers will gobble up almost anything they can get, and everyone is going to take advantage of that. But AMD has a very formidable solution that aims to be not just an alternative to NVIDIA but a leader in the AI segment, and we hope that MI300 can help it achieve that success.
